Most B2B leaders who’ve tested what AI says about their company walked away with a feeling, not a finding. Maybe a pit in their stomach. Maybe a shrug. But they certainly didn’t walk away with a framework for understanding what it means for their pipeline.

In Post 1 of this series, we named the risk: deals are being decided before your company enters the conversation, and your pipeline can’t detect the loss.

In Post 2, we traced the mechanism: AI synthesizes signals from four layers of your market presence, and when those signals are fragmented, the composite it produces is muddled, averaged-out, and stripped of the things that make your company distinct.

The stakes of that fragmentation are getting harder to ignore. According to Dreamdata’s 2026 LinkedIn Ads Benchmarks Report, the average B2B buying journey now stretches to 272 days, and 81% of that journey happens before a prospect ever enters your sales pipeline. That is roughly 220 days, or about seven months, that buyers spend forming preferences, building internal consensus, and constructing shortlists through self-education.

A growing share of that self-education now flows through AI systems. If you can’t measure how AI represents you during those 220 invisible days, you’re flying blind during the phase that increasingly determines whether you make the shortlist at all.

The logical next question: how do you actually assess where you stand?

That’s what this post is for. AI visibility isn’t a vague, unmeasurable concern. It’s scorable across three distinct dimensions. And a structured AI visibility assessment reveals risks that no dashboard, SEO audit, or pipeline report can detect.

Three Dimensions of AI Market Presence

When we assess how AI platforms perceive a company, we evaluate across three dimensions. Each answers a different question. Each reveals a different category of risk.

Visibility: Does AI surface you when it matters?

This is the most fundamental dimension, but most leaders test it the wrong way.

They type their company name into ChatGPT, see a recognizable description, and breathe a sigh of relief. That’s aided visibility, and for established B2B companies, it’s essentially table stakes. Unless there’s brand name confusion, AI will describe you if someone asks about you by name.

The test that actually matters is unaided visibility: when a buyer queries a category, describes a problem, or asks for a shortlist of vendors for a specific use case, does your company appear?

Across how many platforms? At what stages of the buyer’s journey? Consistently, or only when prompted in narrow, specific ways?

The gap between aided and unaided visibility is where most mid-market companies carry a false sense of security. AI knows you exist. But it may not mention you when buyers are actively researching solutions.

For companies competing against larger or more digitally established players, that gap is often the widest, and it’s the most consequential for pipeline.
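To make the aided versus unaided gap concrete, here is a minimal sketch of how it could be scored from a log of AI responses. Everything in it is an assumption for illustration: the company name, the sample responses, the two query-type labels, and the simple substring match used to detect a mention. A real assessment would need more prompts and more careful matching.

```python
from collections import defaultdict

# Hypothetical log of AI responses: (platform, query_type, response_text).
# query_type is "aided" when the prompt names the company, "unaided" otherwise.
responses = [
    ("ChatGPT", "aided", "Acme Analytics is a B2B reporting vendor."),
    ("ChatGPT", "unaided", "Top vendors include Northwind and Contoso."),
    ("Perplexity", "unaided", "Consider Acme Analytics, Northwind, or Contoso."),
    ("Claude", "unaided", "Northwind and Contoso are common choices."),
]

def mention_rates(responses, company):
    """Return {query_type: share of responses that mention the company}."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for _platform, query_type, text in responses:
        totals[query_type] += 1
        # Naive case-insensitive substring match (an assumption, not a standard).
        if company.lower() in text.lower():
            hits[query_type] += 1
    return {query_type: hits[query_type] / totals[query_type] for query_type in totals}

rates = mention_rates(responses, "Acme Analytics")
# The difference between the two rates is the aided/unaided gap.
gap = rates["aided"] - rates["unaided"]
```

In this made-up sample the company appears in every aided response but in only one of three unaided ones, which is the false-sense-of-security pattern described above.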

Accuracy: Does AI describe you correctly?

Showing up matters. Showing up with the wrong story can be worse than not showing up at all.

Accuracy assesses whether AI’s description of your company reflects your current positioning, capabilities, and value proposition. This is where outdated messaging, inconsistent third-party profiles, and forgotten directory listings do the most damage.

AI doesn’t distinguish between your 2026 homepage and your 2023 product page. It synthesizes whatever it finds and produces a composite. If those inputs tell conflicting stories, the output reflects that confusion, and AI tools surface a competitor whose story is clearer instead.

The most dangerous version of inaccuracy is what we call an identity crisis: when different AI platforms describe your company in fundamentally different ways. One says you’re a consulting firm. Another says you’re a software company. A third focuses on a product line you sunset two years ago.

That level of fragmentation doesn’t just confuse buyers. It signals to AI systems that your company’s narrative is unreliable, which erodes the other two dimensions.
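A rough way to check for an identity crisis is to record the category each platform assigns to your company and see how many of them agree. The sketch below assumes a reviewer has already reduced each platform’s description to a short label. The labels and the agreement measure are hypothetical, chosen only to illustrate the idea.

```python
from collections import Counter

# Hypothetical category labels, coded by a reviewer from each platform's
# answer to "What kind of company is <name>?".
platform_labels = {
    "ChatGPT": "consulting firm",
    "Claude": "software company",
    "Perplexity": "software company",
}

def agreement(labels):
    """Return the most common label and the share of platforms that use it."""
    counts = Counter(labels.values())
    top_label, top_count = counts.most_common(1)[0]
    return top_label, top_count / len(labels)

top_label, share = agreement(platform_labels)
# A share well below 1.0 suggests the platforms disagree about what you are.
```

A low agreement share is a prompt to look at which sources each platform is drawing on, not a finding in itself.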

Credibility: Does AI recommend you over alternatives?

This is the dimension that connects directly to pipeline.

Credibility measures whether AI treats your company as a standout in your category — differentiated enough to recommend, authoritative enough to trust. It’s the combination of two forces that are difficult to separate in practice: does AI see a clear “why you?” and does it regard you as a credible source of expertise?

Differentiation without authority is a claim nobody’s validated. Authority without differentiation is expertise that doesn’t help the buyer choose. What drives AI recommendations is the combination — whether AI has enough signal to credibly distinguish you from alternatives when a buyer asks for help deciding.

This dimension is shaped by the depth and consistency of your thought leadership, the clarity of your positioning relative to competitors, the specificity of your use-case content, and whether third-party sources corroborate what you say about yourself.

Companies that publish consistently on a focused set of topics, with a distinctive point of view, build strong credibility signals. Companies with scattered content, thin positioning, or a thought leadership presence that went quiet for a year get treated as participants, not leaders.

What the AEO/GEO Conversation Gets Right (And What It Misses)

If you’ve been following the broader marketing conversation around AI visibility, you’ve probably encountered the terms Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO). The labels differ slightly in emphasis, but the practice is the same: optimizing your presence for AI-driven discovery.

A growing ecosystem of tools from companies like Semrush, Ahrefs, and Profound now offers metrics such as share of voice in AI responses, citation frequency, and AI visibility scores.

Recent research from Notified, analyzing over 200,000 press releases and 13 million AI citations, found that structural signals (headline clarity, metadata completeness, semantic organization) predicted citation performance with a 0.82 correlation. A mid-market B2B company outperformed a nationally recognized retailer by 3.4x in AI citations based on content structure alone. Even at the individual content level, how you’re built matters more than how big you are.

These are all useful, execution-layer metrics. They measure outputs.

But before you can improve any of those outputs, you need to understand why AI represents you the way it does.

Share of voice tells you how often you appear. It doesn’t tell you whether what AI says about you is accurate, differentiated, or structurally grounded.

Citation frequency tells you how often AI references your content. It doesn’t tell you whether the content it’s referencing reflects your actual positioning or an outdated version of it.

The three-dimension framework we use (visibility, accuracy, credibility) is diagnostic rather than performance-focused. It’s designed to surface why the numbers look the way they do, so that when you do optimize, you’re optimizing from a foundation of clarity rather than guessing at which levers to pull.

This isn’t a knock on AEO. The tools and metrics emerging in that space are genuinely valuable for teams that know what they’re optimizing toward. The issue is sequence.

For most mid-market companies, jumping into optimization before diagnosis is the same pattern that has burned growth teams in every previous channel.

The SEO Parallel Worth Learning From

There’s a comparison gaining traction right now between AEO and the early days of SEO, and it’s a useful one. Companies that invested in SEO early captured a compounding advantage that late movers struggled to close. The same dynamic is forming around AI visibility.

But the real lesson from SEO isn’t “start optimizing immediately.”

The companies that won at SEO over the long term were the ones with clear positioning, strong topical authority, and consistency in both messaging and publishing cadence. The ones that chased tactics without those foundations eventually got penalized or plateaued.

Keyword stuffing worked until it didn’t. Link schemes worked until they didn’t. The companies that endured were the ones whose SEO performance was a byproduct of genuine clarity, not a substitute for it.

The same will be true here. AI systems are already sophisticated enough to distinguish between signal depth and signal volume. A company that publishes frequently but without a focused point of view doesn’t build authority. It builds noise.

What a Structured Assessment Actually Reveals

When we run an AI360 assessment for a company, the findings almost always surprise leadership. The patterns were there. They just had no way to see them.

Two dimensions tend to reveal the most about pipeline risk: visibility and credibility.

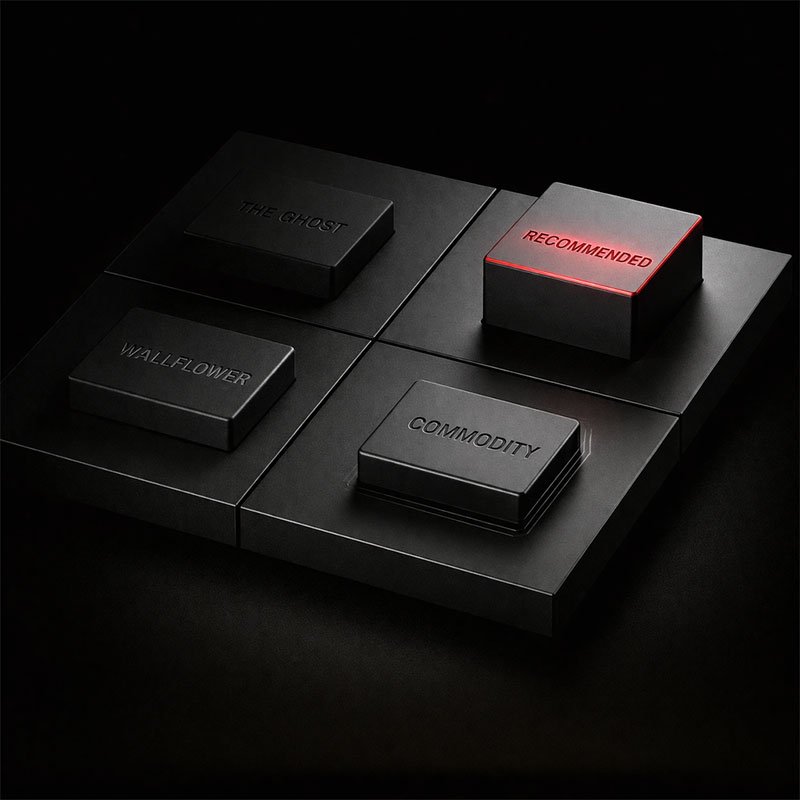

We’ve started mapping these on what we call the AI Recommendation Matrix. It’s a framework we’re actively developing as we run more AI visibility assessments, and it’s still evolving. But it’s been consistently useful for helping leadership teams see where they actually sit, and more importantly, what that position means for how AI is shaping their pipeline.

Most mid-market companies land in The Ghost or The Wallflower quadrants.

The Ghost is the most common position for established companies that have done real work on their positioning. Their story is clear. AI would describe them well if asked directly. But they don’t surface in unaided queries — the category-level, problem-driven, and comparison searches that shape buyer shortlists during those 220 invisible days. The deals they’d win never start because AI doesn’t think of them.

The Wallflower is the most exposed position: low on both axes, fully dependent on channels AI doesn’t touch. These companies are typically invisible to AI and undifferentiated in the signals AI can find. They’re operating on referrals, outbound, and legacy relationships, none of which scale as buyers shift to AI-mediated research.

The Commodity quadrant is deceptive. Being visible feels like progress, and it is. If AI is mentioning you in category discussions, you’re ahead of most mid-market companies. But visibility without differentiation is a fragile position. AI includes you on the list without giving buyers a reason to choose you.

Conversations that start from these recommendations tend to drift toward price, because the buyer was handed a shortlist with no clear favorite. And companies sitting at the edge of that shortlist, say 10th or 12th in a category rather than top five, are one competitor’s positioning improvement away from falling off entirely.

The Recommended is the target state: AI finds you in unaided queries and recommends you as a credible, differentiated option. This is the position where AI is actively working for your pipeline. It’s also the rarest quadrant among mid-market B2B companies today.

What complicates the picture further is that different AI platforms can place you in different quadrants. ChatGPT might recommend you for one use case but treat you as a Ghost for another. Claude’s training data may still hold messaging you retired a year ago, while Perplexity, pulling from live search results, reflects something closer to your current story.

These cross-platform inconsistencies are invisible without a structured AI visibility assessment, and they’re a direct consequence of the signal fragmentation we traced in Post 2.
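The quadrant logic can be sketched in a few lines. This is an illustration, not the scoring method behind the matrix: it assumes each platform yields a visibility score and a credibility score between 0 and 1, uses an arbitrary 0.5 cutoff, and places The Ghost at low visibility and high credibility based on the descriptions above. The per-platform scores are made up.

```python
# Illustrative sketch: scores in [0, 1] and the 0.5 cutoff are assumptions,
# not part of the AI Recommendation Matrix itself.
def quadrant(visibility: float, credibility: float, cutoff: float = 0.5) -> str:
    """Map a (visibility, credibility) pair to a quadrant name."""
    visible = visibility >= cutoff
    credible = credibility >= cutoff
    if visible and credible:
        return "The Recommended"
    if visible:
        return "The Commodity"   # surfaced, but no clear reason to choose you
    if credible:
        return "The Ghost"       # clear story, but absent from unaided queries
    return "The Wallflower"      # low on both axes

# Hypothetical per-platform scores for one company: (visibility, credibility).
scores = {
    "ChatGPT": (0.7, 0.8),
    "Claude": (0.2, 0.6),
    "Perplexity": (0.6, 0.3),
}
placements = {platform: quadrant(v, c) for platform, (v, c) in scores.items()}
```

With these sample numbers the same company lands in three different quadrants across three platforms, which is the kind of inconsistency that a single spot check would miss.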

Why Traditional Metrics Miss This Entirely

A company can rank on the first page of Google for every target keyword and still be absent from AI recommendations. This surprises leaders who’ve invested heavily in SEO, but it makes sense once you understand how AI systems build their responses.

AI doesn’t just index web pages. It synthesizes across your entire market presence:

- What you say about yourself

- What third parties say about you

- What your thought leadership signals

- What’s missing from the conversation altogether

A strong Google ranking means your content is findable by search algorithms. It doesn’t mean AI systems regard you as the answer to a buyer’s question.

The Dreamdata report reinforces why this matters. Their data shows that conversion events like form fills don’t meaningfully compress the buying timeline. The deal clock starts ticking when the buyer first engages with your brand, not when they fill out a form. Which means the moment that shapes the outcome is upstream of everything your dashboard measures. It’s the moment you enter the buyer’s consideration.

If that moment is increasingly shaped by AI, and your existing metrics can’t see it, your dashboard is measuring the last 19% of a journey that was decided in the first 81%.

This is the gap most marketing dashboards can’t surface. The metrics your team tracks today were designed for a world where discovery happened through search results, event attendance, and direct referrals. AI-mediated discovery operates on different inputs, produces different outputs, and leaves very little trace in your existing analytics.

Measuring Creates the Path Forward

If Posts 1 and 2 in this series surfaced discomfort, this post is meant to offer the first genuine relief. The anxiety of not knowing is worse than the findings themselves.

A structured AI visibility assessment converts that ambient anxiety into specific, prioritized findings you can actually act on:

- You learn which of the three dimensions are strong and which are exposed.

- You see where different AI platforms agree and where they diverge.

- You identify the specific signal layers from our framework that are contributing to the problem.

- And you get a concrete starting point rather than a vague sense that “something needs to change.”

That clarity is the prerequisite for any meaningful action. Without it, you’re guessing. And as the AEO space matures and more companies start investing in AI optimization, guessing puts you further behind every quarter.

What the Data Almost Always Points To

Measuring AI visibility is essential. But here’s what the assessment data reveals more often than not: the gaps don’t originate in your AI presence. They originate in your foundation.

An entire optimization industry is forming around AI visibility right now. It has frameworks, metrics, and playbooks. And for companies that haven’t diagnosed why AI gets them wrong in the first place, those tools will do exactly what every other tactic-first investment does: make the wrong signal louder.

That trap, and how to avoid it, is where we’re headed in Post 4.

Want to see where your company stands across all three dimensions?

Our AI360 assessment maps how major AI platforms perceive your company and gives you findings specific enough to act on.

Book a 30-minute conversation and we’ll run the AI visibility assessment for free.